Augmented Reality Texture Extraction Experiment

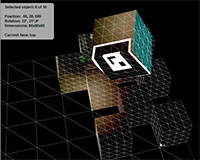

19 Sep 2009

This is an AR-based experiment that lets the user lift textures from real-world objects in live video and apply them to the 3D objects overlaid on top of them. Only box primitives are supported here, but the general idea could be extended to other 3D primitives, or potentially even to more complex objects, with some clever image compositing and UV mapping.
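
The post doesn't spell out how the "deperspectivizing" step works. What follows is a minimal Python sketch of one standard approach, perspective rectification with a homography, under several assumptions. The four screen-space corners of a box face are taken as already known (for example, projected from the marker pose the tracker reports). The video frame is a plain list of rows of pixel values. All function names are hypothetical. The sketch maps each output texel's (u, v) coordinate through the homography into the frame and samples it with a nearest-neighbour lookup.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
Image = List[List[float]]  # rows of pixel values, indexed img[y][x]


def solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a square linear system by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def square_to_quad(quad: Sequence[Point]) -> List[float]:
    """Hypothetical helper: homography coefficients h0..h7 (with h8 = 1) mapping
    the unit-square corners (0,0), (1,0), (1,1), (0,1) onto `quad`, in that order."""
    src = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
    a: List[List[float]] = []
    b: List[float] = []
    for (u, v), (x, y) in zip(src, quad):
        # x * (h6*u + h7*v + 1) = h0*u + h1*v + h2, rearranged to be linear in h.
        a.append([u, v, 1.0, 0.0, 0.0, 0.0, -u * x, -v * x])
        b.append(x)
        # Same for y with h3..h5.
        a.append([0.0, 0.0, 0.0, u, v, 1.0, -u * y, -v * y])
        b.append(y)
    return solve(a, b)


def apply_h(h: Sequence[float], u: float, v: float) -> Point:
    """Map (u, v) through the homography, including the perspective divide."""
    w = h[6] * u + h[7] * v + 1.0
    return ((h[0] * u + h[1] * v + h[2]) / w, (h[3] * u + h[4] * v + h[5]) / w)


def extract_face_texture(frame: Image, quad: Sequence[Point], size: int) -> Image:
    """Hypothetical helper: rectify the quad in `frame` into a size x size
    texture using nearest-neighbour sampling (assumes corners in pixel units)."""
    h = square_to_quad(quad)
    height, width = len(frame), len(frame[0])
    tex: Image = []
    for j in range(size):
        row = []
        for i in range(size):
            # Sample at texel centres, then clamp to the frame bounds.
            x, y = apply_h(h, (i + 0.5) / size, (j + 0.5) / size)
            xi = min(max(round(x), 0), width - 1)
            yi = min(max(round(y), 0), height - 1)
            row.append(frame[yi][xi])
        tex.append(row)
    return tex
```

The resulting texture could then be applied to the matching face of the box with UV coordinates spanning the full unit square. Because the homography includes the perspective divide, the rectified texture avoids the skew that a plain affine mapping of the quad would introduce.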

Click to run demo

Click the image on the right to run a live version of the experiment, then print the AR marker (PDF | PNG) and point your webcam at it.

It's running Saqoosha's (feature-incomplete) Alchemy branch of the FLAR Toolkit, along with Papervision3D.

In the future, it would be nice to figure out how to apply bilinear filtering to the 'deperspectivized' textures. I could also add a feature to export the textured 3D objects to a 3D file format (probably OBJ) if there's any interest.
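
The filter itself is simple to sketch. Instead of taking the single nearest pixel, it blends the four surrounding pixels, weighting each by the fractional part of the sample position. This minimal Python version makes three assumptions: images are lists of rows of pixel values, pixel centres sit at integer coordinates, and samples are clamped at the image border. `sample_bilinear` is a hypothetical name.

```python
import math
from typing import List

Image = List[List[float]]  # rows of pixel values, indexed img[y][x]


def sample_bilinear(img: Image, x: float, y: float) -> float:
    """Hypothetical helper: sample img at fractional (x, y) by blending the four
    nearest pixels; coordinates outside the image are clamped to the edges."""
    height, width = len(img), len(img[0])
    x = min(max(x, 0.0), width - 1.0)
    y = min(max(y, 0.0), height - 1.0)
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
    fx, fy = x - x0, y - y0
    # Interpolate horizontally along the two rows, then vertically between them.
    top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
    bottom = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
    return top * (1 - fy) + bottom * fy
```

Using this in place of a nearest-neighbour lookup when resampling each texel would smooth the rectified texture. It only interpolates between adjacent pixels, though. When a face covers many more video pixels than the texture has texels, each texel still skips over source pixels, so some aliasing remains without area averaging or a mipmap-style prefilter.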

This piece builds on these two video projection tests and is a modest implementation of one of the themes from this post of augmented reality ideas.